How do you turn time with your Subject Matter Expert into a long-term asset?

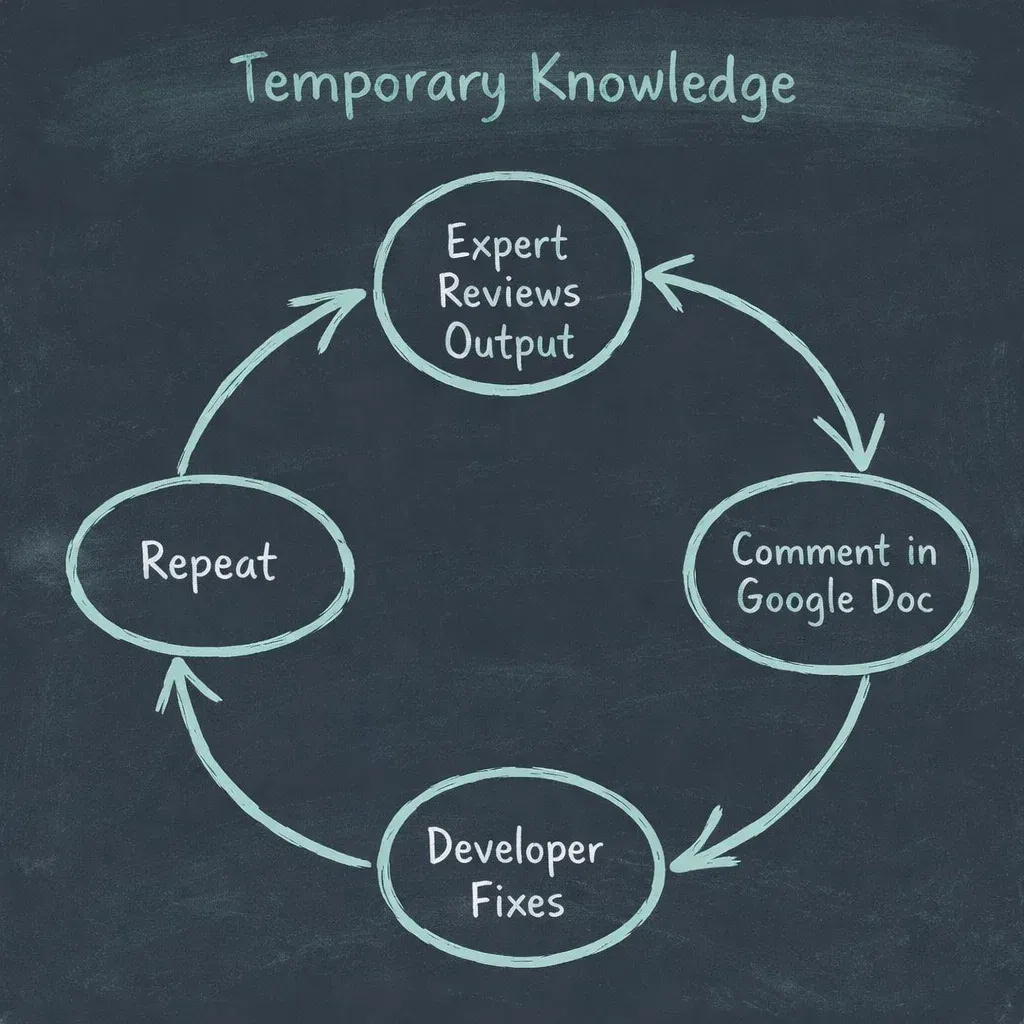

Here is a situation that comes up more than it should.

A company has an AI workflow running in production. Maybe it’s extracting information from construction defect reports, or answering client questions from a structured knowledge base.

The output isn’t quite right yet, so they bring in a subject matter expert. The expert leaves comments in a shared doc, and a developer spends a week fixing things based on those comments.

Then they do it again the following week.

For most startups, this cycle time is a problem.

One to two weeks between iterations is too slow if you’re trying to move quickly. But the cycle time is actually a symptom of the real issue, not the issue itself.

The real issue is that nothing is being captured.

Every time that subject matter expert sits down and reviews the output, they’re generating knowledge. They know what good looks like. They know when a citation is wrong, when a question is missing, when the structure of a response is off.

That knowledge gets burned into a Google Doc comment and then it’s gone. When the expert is no longer available, either because the budget dries up or the relationship ends, you’re back to square one.

This is the thing developers coming from traditional software tend to underestimate.

In deterministic software, you write a test, it passes or fails, and it lives forever. You don't need the person who wrote the original spec to show up every week to check whether the code still works. The test captures their knowledge.

AI systems don't behave that way. The same input doesn’t reliably produce the same output. You can't write a unit test that checks whether an AI-generated defect assessment is correct the same way you'd test a calculation.

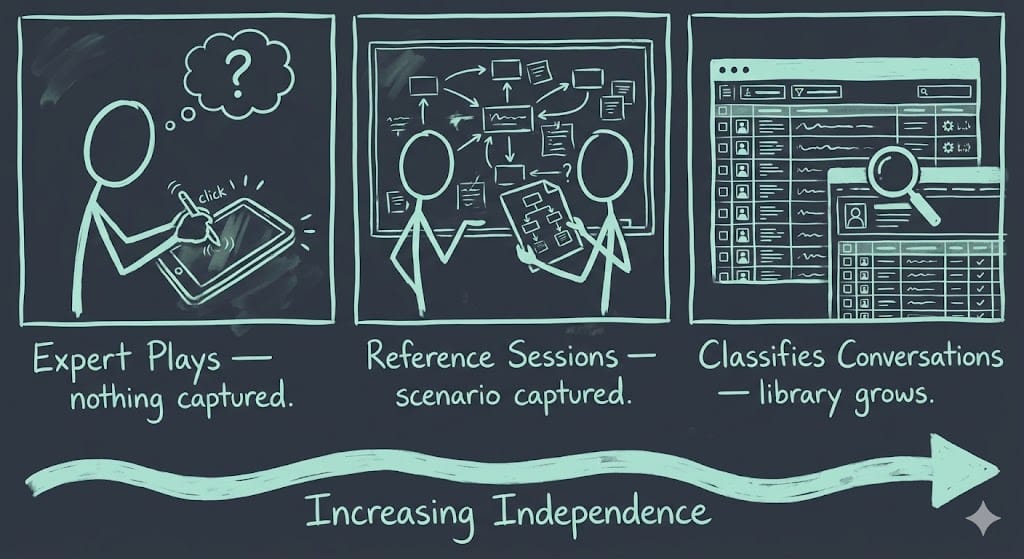

Most teams are using their expert in the weakest possible way.

The expert plays around with the system and gives feedback, then the developer iterates.

This is fine for new projects. It’s fast, it takes thirty to sixty minutes per session, and it gets you to a working baseline without needing any real customer data.

But every single session burns expert time and produces nothing the business can use independently.

To improve on this, start building something more durable.

Instead of asking the expert to review AI outputs, you ask them to do their actual job while you document it. Take a financial planner walking a client through a trust structure setup. Walk through that scenario with them and capture everything: the questions they ask, the order they ask them in, and the outputs they produce.

That session becomes your reference document. When the AI goes through the same scenario, you have something concrete to compare against. Better, worse, or roughly the same.

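To be clear, you would compare your AI-generated outputs against this reference document, side by side, to find any discrepancies: questions the AI skipped, questions it asked out of order, and outputs that differ from what the expert produced.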
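One way to make that comparison concrete is to store the expert's session and the AI's session in the same shape and diff them. The sketch below is illustrative only: the `Session` class, the `compare_to_reference` function, and the example questions are all hypothetical, and it assumes questions can be matched by exact text after lowercasing, which real transcripts would rarely allow without some fuzzier matching.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """One walkthrough of a scenario: the questions asked, in order."""
    questions: list[str]


def compare_to_reference(reference: Session, candidate: Session) -> dict:
    """Report which reference questions are missing, which are extra,
    and whether the shared questions appear in the same relative order."""
    ref = [q.strip().lower() for q in reference.questions]
    cand = [q.strip().lower() for q in candidate.questions]

    missing = [q for q in ref if q not in cand]
    extra = [q for q in cand if q not in ref]

    # Keep only the questions both sessions share, in each session's own order.
    shared_ref_order = [q for q in ref if q in cand]
    shared_cand_order = [q for q in cand if q in ref]

    return {
        "missing": missing,
        "extra": extra,
        "order_matches": shared_ref_order == shared_cand_order,
    }


# Hypothetical example: the expert's session versus the AI's session.
expert = Session([
    "Do you have existing trust structures?",
    "Who are the beneficiaries?",
    "What assets will the trust hold?",
])
ai = Session([
    "Who are the beneficiaries?",
    "What assets will the trust hold?",
])

report = compare_to_reference(expert, ai)
print(report)
```

In this example the report flags the question about existing trust structures as missing, which is exactly the kind of discrepancy the reference document exists to surface.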

The next improvement requires infrastructure, but is a lot more scalable than the previous two modes.

Once real users are interacting with your system, you can start having your expert classify those conversations. They look at a conversation, decide whether it succeeded or failed, and write two to three sentences explaining why.

Something like: “This conversation failed because the assistant moved forward without asking the client about existing trust structures first, which is always required before giving this kind of advice.”
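A classification like this is easiest to reuse if it is stored as structured data rather than as a comment. Here is a minimal sketch of what one record might look like. The field names, the `LabeledConversation` class, and the choice of JSON Lines as a storage format are assumptions for illustration, not a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class LabeledConversation:
    """One expert judgement about one real conversation."""
    conversation_id: str  # hypothetical identifier from your own system
    succeeded: bool       # the expert's pass/fail verdict
    reason: str           # the two to three sentences explaining why
    reviewer: str         # who made the call


def to_jsonl_line(label: LabeledConversation) -> str:
    """Serialise a label as one JSON line, refusing labels with no reason."""
    if not label.reason.strip():
        raise ValueError("A label without a reason captures no knowledge.")
    return json.dumps(asdict(label))


example = LabeledConversation(
    conversation_id="conv-001",
    succeeded=False,
    reason=(
        "The assistant moved forward without asking the client about "
        "existing trust structures first, which is always required "
        "before giving this kind of advice."
    ),
    reviewer="financial-planner",
)

line = to_jsonl_line(example)
print(line)
```

Requiring a non-empty reason is the design choice that matters here: the verdict alone tells you what happened, while the reason is the part that carries the expert's knowledge.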

That failure reason is valuable.

It’s domain knowledge in a form the business owns and can use without the expert in the room. Over time that library of classified conversations becomes the foundation for measuring quality without a human reviewer in the loop on every single change.
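As a rough illustration of how a failure reason can turn into a repeatable measurement, the sketch below encodes one expert rule as a check and runs it over a set of transcripts. Everything here is hypothetical: the transcript shape, the function names, and especially the keyword matching, which is a crude stand-in for whatever judging method a team actually adopts.

```python
# A transcript is a list of (role, text) turns. This shape is an assumption.
Transcript = list[tuple[str, str]]


def asks_about_existing_trusts_first(transcript: Transcript) -> bool:
    """Encode one expert failure reason as a check: the assistant must ask
    about existing trust structures before it recommends anything.
    Keyword matching is a placeholder for a more robust judgement."""
    for role, text in transcript:
        if role != "assistant":
            continue
        lowered = text.lower()
        if "existing trust" in lowered and "?" in lowered:
            return True   # the required question came first
        if "recommend" in lowered:
            return False  # advice was given before the question
    return False          # the question was never asked


def pass_rate(transcripts: list[Transcript]) -> float:
    """Fraction of transcripts that satisfy the expert's rule."""
    if not transcripts:
        raise ValueError("Need at least one transcript to measure.")
    passed = sum(asks_about_existing_trusts_first(t) for t in transcripts)
    return passed / len(transcripts)


good = [
    ("user", "I want to set up a trust."),
    ("assistant", "Do you have any existing trust structures?"),
    ("user", "No."),
    ("assistant", "I recommend a discretionary trust."),
]
bad = [
    ("user", "I want to set up a trust."),
    ("assistant", "I recommend a discretionary trust."),
]

print(pass_rate([good, bad]))
```

Re-running a check like this after each change to the system gives a number to track over time, and each new failure reason the expert writes is a candidate for another check.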

The point isn’t to cut the subject matter expert out. The point is that every session should produce an artefact your business owns.

And if not? When that expert eventually walks out the door, the quality of the system walks out with them.